Why an MCP Foundation is Essential for Industrial AI

When generative AI started moving from enterprise productivity tools into operational technology, the first instinct for many software vendors was understandable: build the AI directly into the product. Embed an AI assistant to refer users to the right Help documentation and ship it as a feature. Further down the line, tools might connect the assistant to the application’s data and let it run free. It’s fast to market, easy to demo, and straightforward to position.

The limitation of that approach becomes clear as organizations move from pilots to production. An embedded AI assistant baked into a single application is only as flexible as that application, and it creates a new type of data silo. When the enterprise changes its approved AI platform, or as security reviews, licensing agreements, and model capabilities evolve, the embedded assistant goes unused or gets bypassed. When the user needs context from another system alongside the industrial data, the embedded assistant usually cannot reach it. And when autonomous workflow agents are built on top of a tightly coupled in-app layer, the architecture has to be rebuilt every time the AI platform underneath it changes.

The more durable investment is not the assistant or the agent. It is the foundation they run on.

Where enterprise AI adoption stands today

Through conversations with industry professionals across process manufacturing and industrial operations, a picture of where enterprises actually stand with AI has emerged, and it is more nuanced than the headlines suggest.

The majority of large enterprises have deployed some form of generative AI at the enterprise level. But beneath that headline, three challenges recur with notable consistency. First, many teams are still working to identify the use cases where AI produces reliable, high-value output. The gap between what AI can theoretically do and what it can dependably do in a specific operational context remains significant. Second, response quality varies considerably, and in industrial settings where the cost of a wrong answer can be a deferred maintenance decision or a quality deviation, "good enough" is not sufficient. Third, and most important for how we think about architecture, trust in AI for complex or high-stakes analysis remains limited.

That third point deserves attention. The limitation is not skepticism about AI in general. It is a reasonable response to output that cannot be verified, repeated, or audited. When an AI produces an answer through an opaque reasoning process that lives only in a conversation window, there is no way to validate it, share it, or build upon it. For the kinds of analysis that matter most in process manufacturing, such as production optimization, waste and emissions reduction, and availability root cause analysis (RCA), that is a genuine operational constraint.

This is where structure matters. And it is where the MCP foundation changes the equation.

What MCP provides that embedded assistants cannot

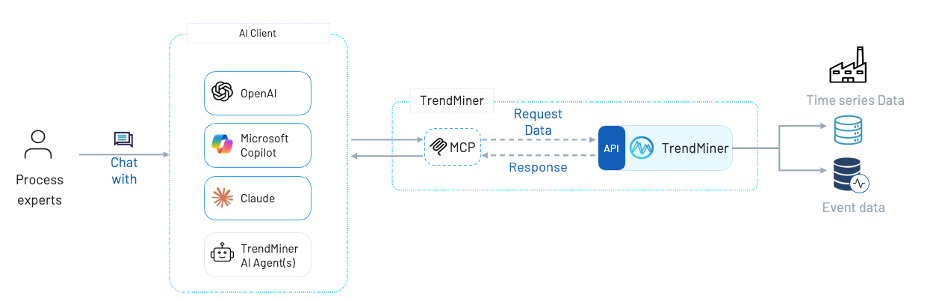

Model Context Protocol (MCP) is an open standard now maintained under the Linux Foundation's Agentic AI Foundation, with participation from every major AI platform. It defines how AI systems request and receive information from external tools. Because the protocol is standardized, an MCP server exposes a consistent set of tools and data interfaces regardless of which AI platform is calling them.
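To make the request shape concrete, here is a minimal sketch of the JSON-RPC 2.0 message an MCP client sends to invoke a tool. The `tools/call` method and the `name` and `arguments` parameters follow the MCP specification. The tool name `search_time_series`, the tag, and the time range are hypothetical and do not describe TrendMiner's actual tool catalogue.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool name and arguments, for illustration only.
request = build_tool_call(
    1,
    "search_time_series",
    {
        "tag": "REACTOR_1.TEMP",
        "start": "2024-01-01T00:00:00Z",
        "end": "2024-01-02T00:00:00Z",
    },
)
print(request)
```

Whichever AI platform issues the call, the message has the same structure, which is what lets the server side stay unchanged.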

That consistency is what makes it a foundation rather than a feature. As shown in the architecture above, a process expert working in their organization's approved LLM platform, whether that is OpenAI, Microsoft Copilot, Claude, or a purpose-built TrendMiner agent, connects through a single MCP layer to TrendMiner's API, which generates insights from its contextualized data layer on top of the raw time-series and event data. The organization does not need to choose between its enterprise AI investment and purpose-built industrial analytics. The two work together, within the client the engineer already uses.

Critically, the MCP server does not need to be rebuilt when the AI landscape evolves, whether a better model becomes available, the enterprise shifts platforms, or a new autonomous workflow agent architecture emerges. The tools it exposes remain stable. The industrial analytics layer is separated from the model layer by design. Embedded AI assistants do not have this property: they couple the AI experience directly to the application, which means platform changes require rework at the application level.
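The separation can be sketched as follows, under the assumption that each AI platform reduces to a thin client calling one shared tool layer. All names here (`ToolLayer`, `PlatformClient`, `detect_events`) and the sample values are hypothetical and do not represent TrendMiner's or any vendor's API.

```python
from typing import Callable, Dict


class ToolLayer:
    """Stand-in for the stable tool interface an MCP server exposes."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[..., dict]] = {}

    def register(self, name: str, fn: Callable[..., dict]) -> None:
        self._tools[name] = fn

    def call(self, name: str, **arguments) -> dict:
        return self._tools[name](**arguments)


class PlatformClient:
    """Stand-in for any AI platform; only the label differs between platforms."""

    def __init__(self, platform: str, tools: ToolLayer) -> None:
        self.platform = platform
        self.tools = tools

    def ask(self, tool_name: str, **arguments) -> dict:
        # The platform changes; the tool call does not.
        return self.tools.call(tool_name, **arguments)


def detect_events(tag: str, threshold: float) -> dict:
    # Hypothetical analytics tool; fixed samples keep the sketch self-contained.
    samples = [71.2, 70.8, 85.4, 71.0]
    return {"tag": tag, "events": [v for v in samples if v > threshold]}


layer = ToolLayer()
layer.register("detect_events", detect_events)

old_client = PlatformClient("platform-a", layer)
new_client = PlatformClient("platform-b", layer)

result_a = old_client.ask("detect_events", tag="REACTOR_1.TEMP", threshold=80.0)
result_b = new_client.ask("detect_events", tag="REACTOR_1.TEMP", threshold=80.0)
```

Swapping `old_client` for `new_client` requires no change to `ToolLayer` or to the registered tool, which is the property the embedded approach lacks.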

This is the architectural advantage that becomes increasingly apparent in conversations with digitalization leaders who have navigated one or two cycles of AI platform transition already.

Structure enables trust and persistent solutions

Returning to the trust challenge: what MCP enables, and what an AI client connected to raw data sources typically does not, is persistence, repeatability, and the ability to write back.

When an analysis is run through TrendMiner's tools via MCP, the underlying analytical work, such as batch comparison and event detection, can be carried out through vetted tools in TrendMiner, in conjunction with outside knowledge. But the architecture goes further than read-only retrieval. The LLM can also write back to TrendMiner: creating monitors, saving analyses and dashboards, and logging context items based on what it has found. An autonomous workflow agent that identifies an anomaly pattern can set up a monitor to watch for that pattern going forward, without the engineer having to step back into the application to configure it manually.

This moves AI from observer to participant in the operational workflow. The AI synthesizes and communicates, and then it acts. Those actions persist in TrendMiner's enhanced data layer, not in a conversation log that disappears at the end of a session.
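A minimal sketch of this read-then-write-back pattern, assuming an in-memory store in place of TrendMiner's data layer. The `MonitorStore` class, the `find_anomalies` and `create_monitor` functions, the tag name, and the sample values are hypothetical illustrations, not TrendMiner's actual tools.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Monitor:
    tag: str
    upper_limit: float


@dataclass
class MonitorStore:
    """In-memory stand-in for a persistent layer that outlives the chat session."""

    monitors: List[Monitor] = field(default_factory=list)


def find_anomalies(values: List[float], upper_limit: float) -> List[int]:
    """Read-side tool: return the indices of samples above the limit."""
    return [i for i, v in enumerate(values) if v > upper_limit]


def create_monitor(store: MonitorStore, tag: str, upper_limit: float) -> Monitor:
    """Write-side tool: persist a monitor so the pattern is watched going forward."""
    monitor = Monitor(tag=tag, upper_limit=upper_limit)
    store.monitors.append(monitor)
    return monitor


store = MonitorStore()
pressure = [4.1, 4.0, 6.3, 4.2, 6.8]  # hypothetical sample data

anomalies = find_anomalies(pressure, upper_limit=6.0)
if anomalies:
    # The agent acts on its finding instead of only reporting it.
    create_monitor(store, tag="PUMP_7.PRESSURE", upper_limit=6.0)
```

The result of the session is a record in `store`, which an engineer can inspect and rerun, rather than a line of chat text.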

For the use cases where trust is lowest and stakes are highest, like diagnosing the cause of a quality deviation or assessing whether a pressure pattern indicates an emerging failure, this distinction is significant. An engineer who can point to a saved monitor, a logged context item, or a documented analysis within TrendMiner can trust the repeatability of the values and conclusions drawn. Without those artifacts, the only way to replicate a result is to reuse the same prompt and hope that the same considerations and operations are carried out inside the AI black box.

Repeatability, auditability, and persistent action are not peripheral benefits. In process manufacturing, they are the prerequisites for AI output to influence decisions at scale.

The efficiency case

Beyond the architectural and trust arguments, there is a straightforward efficiency case for the MCP approach that comes through consistently in customer conversations.

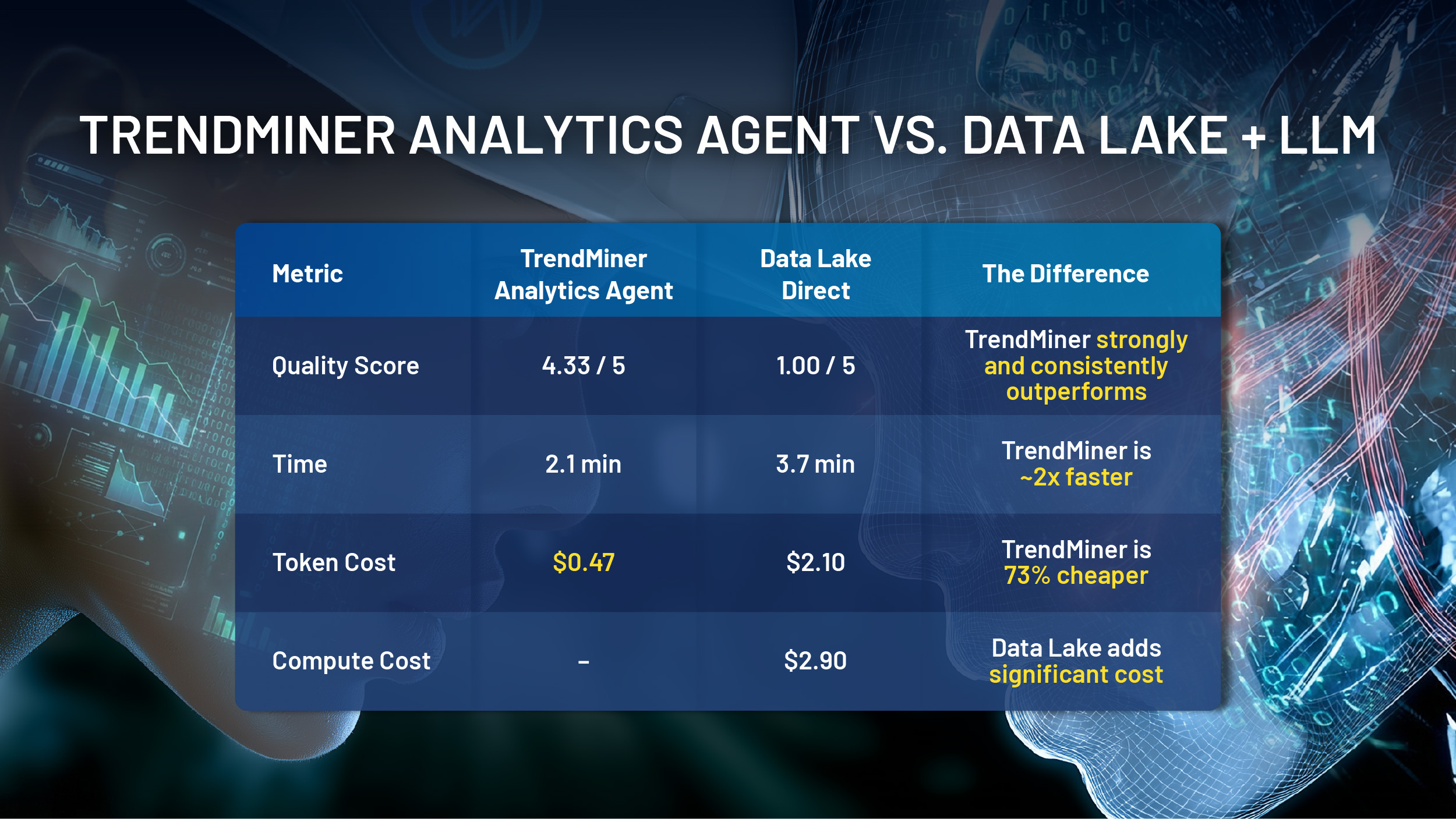

Purpose-built industrial tools, specialized in time series and contextual analytics, invoked through the MCP layer, handle the domain-specific analytical work before the model performs its reasoning. The model receives contextualized, processed output rather than raw data it must interpret independently. This reduces token consumption and therefore cost, while improving the relevance of what the model works with. It also reduces the time from question to useful answer, because the analytical heavy lifting has already been done by tools designed specifically for the task.

The contrast with a direct data lake connection is instructive here. Connecting an LLM directly to raw historian data is technically possible, but it produces a high-cost, low-precision result: large volumes of unstructured time-series data reaching a model that has no domain-specific framework for interpreting them. The MCP approach inverts this: the domain expertise lives in the tools, and the model reasons over the output of those tools.
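The difference in payload size can be illustrated with a rough sketch. It uses serialized character count as a crude proxy for token count, which is an assumption since real tokenizers behave differently. The `summarize` function and the synthetic series are hypothetical.

```python
import json
import statistics


def summarize(values, limit):
    """Hypothetical domain tool: reduce a raw series to a few contextual facts."""
    return {
        "count": len(values),
        "mean": round(statistics.mean(values), 2),
        "max": max(values),
        "samples_above_limit": sum(1 for v in values if v > limit),
    }


# Synthetic series: one day of per-minute readings with a brief excursion.
raw = [70.0 + (i % 10) * 0.1 for i in range(1440)]
raw[600:605] = [85.0] * 5

raw_payload = json.dumps(raw)
tool_payload = json.dumps(summarize(raw, limit=80.0))

# Character count is only a rough proxy for tokens.
print(len(raw_payload), len(tool_payload))
```

The model that receives `tool_payload` starts from the excursion count and the maximum value instead of having to find them in 1,440 raw numbers.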

Building for a landscape that will keep changing

The AI platforms, models, and autonomous workflow agent architectures available today will look different in two years. That is not a prediction. It is a description of what has already happened over the past two years.

What we hear from the organizations thinking most carefully about their industrial AI investments is a consistent instinct: do not couple the operational data layer to any single model, platform, or embedded vendor. Build the industrial foundation in a way that works with whatever AI infrastructure the organization adopts going forward.

An MCP foundation is that bet. It is not a feature that needs to be rebuilt when the AI landscape shifts. It is the stable layer between operational data and AI reasoning — one that keeps pace with a rapidly evolving environment precisely because it is not dependent on any one part of it.

The embedded AI assistant is a product decision. The MCP foundation is an infrastructure decision. For organizations planning beyond the next eighteen months, the distinction matters.